🎯 Quick Answer

What: The 16 Questions DMAIC Framework is a question-first reframe of Lean Six Sigma. It replaces tool-driven phase work with 16 strategic questions that organize every project from Define through Control.

Why It Matters: Tools-first projects produce artifacts. Questions-first projects produce answers. The difference shows up in sustained results.

How to Apply: Before opening any DMAIC tool, identify which of the 16 questions the team is currently trying to answer. Tools become instruments, not deliverables.

Expected Results: Projects that exit each phase with documented evidence rather than completed templates. Higher rates of result sustainment beyond the close-out meeting.

Most DMAIC projects fail tools-rich and question-poor.

Walk into any Green Belt classroom and the same artifacts appear on the wall. SIPOC diagrams. Fishbone charts. FMEAs. Control plans. Teams treat the toolbox as the methodology. Six months later, the metrics drift back toward where they started, and nobody can explain what the analysis actually surfaced.

The pattern repeats across hundreds of project reviews spanning oil and gas, healthcare, and manufacturing. The projects that delivered durable results were not the ones with the most tools. They were the ones where every team member could state, without consulting the slide deck, exactly what question each tool was answering. That is the shift the 16 Questions DMAIC framework forces.

This article walks through the 16 questions that have structured every project under VRI's federally registered methodology since 1999. It covers why questions outperform tools, the question set itself, how cross-industry patterns hold up against the framework, and how to start applying it without reorganizing the entire toolkit.

Why Tools-First DMAIC Fails

Tool-driven DMAIC turns improvement work into deliverables-by-checklist. The team produces a SIPOC because the playbook says produce a SIPOC. The fishbone gets built because the module called for a fishbone. Each artifact looks correct on the storyboard slide. Then the project closes, the metrics drift, and the post-mortem cannot reconstruct what the analysis was actually meant to prove.

Belt programs reinforce this pattern unintentionally by organizing content by tool name. Module 7 covers Statistical Process Control. Module 11 covers Design of Experiments. Module 14 covers Control Charts.

Practitioners exit the program fluent in tools but unsure which question each tool was meant to answer. When real projects start, muscle memory says to reach for the next tool rather than pause for the next question.

The pattern shows up the same way across industries. In a major energy company's onshore drilling operation, a non-productive time project produced thirty pages of analysis: cause-and-effect diagrams, Pareto charts, run charts, correlation studies. The team could not articulate, in one sentence, what was actually causing the variance. The artifacts existed. The understanding did not.

A regional medical center ran a similar exercise around emergency department door-to-provider times. The deliverable folder contained every expected template. Six months after close-out, the median wait time had returned to baseline. The team had measured the process. Nobody had answered the question of what the measurement was meant to surface.

A Fortune 500 semiconductor manufacturer ran a yield improvement project with full statistical process control deployment. Charts went up on every line. Out-of-control points triggered investigations. Yield stayed flat. The tools were running. The inquiry behind them was not.

💡 Key Pattern: Tools serve questions. Questions do not serve tools. When the order inverts, projects produce artifacts instead of answers.

The Question-First Reframe

The 16 Questions DMAIC framework reorganizes the methodology around inquiry rather than instrumentation. Each phase becomes a small set of strategic questions. Tools become the means of answering those questions, not the ends in themselves.

Several of the questions are compound: they group related inquiries because those inquiries are interdependent. Question 1, for example, asks who the customers are, what the business case is, and what metrics matter. Those three cannot be separated cleanly.

Customer requirements drive the business case. The business case dictates what to measure. The metrics validate whether the customer was understood. Treating them as one compound question forces the team to reconcile the three together rather than producing three disconnected artifacts.

Evidence-based phase exits anchor the reframe. Every question requires documented evidence to be considered answered. Discussion does not count. Consensus does not count.

A question is closed when the team can show the evidence that closes it. The deliverable proves the question was answered.
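The closure rule can be expressed as a small data structure. This is a minimal illustration, not part of VRI's toolkit: the `Question` and `EvidenceItem` classes and the evidence labels are hypothetical, and the sketch assumes discussion and consensus are logged as items of their own so the rule can ignore them.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical labels: these may be logged, but they never close a question.
NON_EVIDENCE = {"discussion", "consensus"}


@dataclass
class EvidenceItem:
    kind: str       # illustrative labels such as "msa_study" or "capability_report"
    reference: str  # free-form pointer to where the artifact lives


@dataclass
class Question:
    number: int
    text: str
    evidence: list[EvidenceItem] = field(default_factory=list)

    def is_closed(self) -> bool:
        """Closed only if at least one logged item is documented evidence."""
        return any(item.kind not in NON_EVIDENCE for item in self.evidence)


q5 = Question(5, "How is the process measured, and is the measurement system precise and accurate?")
q5.evidence.append(EvidenceItem("consensus", "team meeting notes"))
print(q5.is_closed())  # False: consensus alone does not close the question
q5.evidence.append(EvidenceItem("msa_study", "Gage R&R study report"))
print(q5.is_closed())  # True
```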

Sequential progression forces discipline. A team cannot meaningfully address Question 4 without first answering Questions 1 through 3. Without knowing the customer, the business case, and the metrics, no current-state assessment can be evaluated as good or bad.

Without knowing the major process steps, no measurement strategy can target the right places. The framework prevents teams from skipping ahead to the analysis they want to do before the foundation justifies it.
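The sequencing rule behaves like a gate. Here is a minimal sketch that assumes each question is tracked only by its number (1 to 16) and counts as closed once its evidence has been accepted. The function names `can_open` and `next_question` are hypothetical.

```python
from __future__ import annotations

from typing import Optional

TOTAL_QUESTIONS = 16


def can_open(question: int, closed: set[int]) -> bool:
    """Question n may be worked only after every question 1..n-1 is closed."""
    if not 1 <= question <= TOTAL_QUESTIONS:
        raise ValueError(f"question must be between 1 and {TOTAL_QUESTIONS}, got {question}")
    return all(q in closed for q in range(1, question))


def next_question(closed: set[int]) -> Optional[int]:
    """Lowest-numbered question not yet closed, or None once all 16 are closed."""
    for q in range(1, TOTAL_QUESTIONS + 1):
        if q not in closed:
            return q
    return None


# A team that has closed Define (Q1-Q3) may open Q4 but may not jump to Q9.
closed = {1, 2, 3}
print(can_open(4, closed))    # True
print(can_open(9, closed))    # False
print(next_question(closed))  # 4
```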

The behavior change is visible within the first project. Teams stop debating which tool to use. They start debating what the team needs to know. The fishbone-versus-affinity-diagram argument disappears. The new argument becomes whether the root cause hypothesis is supported by data. That is a more productive argument.

The same three scenarios that failed tools-first succeeded question-first. The drilling non-productive time effort, the door-to-provider improvement, and the semiconductor yield problem each produced sustained results once the teams reorganized around the 16 questions and let tool selection follow the inquiry.

The 16 Questions, Phase by Phase

The 16 questions distribute across the five DMAIC phases as follows. Each entry includes the question, what it surfaces, and the tools commonly recruited to answer it.

DEFINE Phase (Q1–Q3)

Q1: Who are the customers, what is the business case, and what metrics will be captured?

Surfaces customer requirements, financial justification, and measurement strategy together. Tools: Voice of the Customer, project charter, critical-to-quality tree.

Q2: What are the major steps in the process, and does the project need to be scoped tighter?

Surfaces the high-level process and forces a scope reality check before deeper work begins. Tools: SIPOC, process boundary diagram, project scope statement.

Q3: How have improvement opportunities been prioritized, who are the stakeholders and team, and what are the goals and timeline?

Surfaces the project plan and team structure. Tools: prioritization matrix, stakeholder analysis, RACI chart, project timeline.

MEASURE Phase (Q4–Q7)

Q4: What is the current state of the process, and is the process constrained?

Surfaces process flow, value-add versus non-value-add activity, and bottleneck identification. Tools: detailed process map, value stream map, theory of constraints analysis.

Q5: How is the process measured, and is the measurement system precise and accurate?

Surfaces measurement system reliability before any baseline is trusted. Tools: Measurement System Analysis, Gage R&R, Attribute Agreement Analysis.
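One common Gage R&R summary is %GRR: gage standard deviation divided by total study standard deviation. This is a minimal sketch that assumes the variance components have already been estimated, for example by an ANOVA Gage R&R study. The 10% and 30% bands are the commonly cited AIAG MSA guideline. Some programs compare against tolerance instead, or use other cutoffs.

```python
import math


def percent_grr(var_repeatability: float, var_reproducibility: float, var_part: float) -> float:
    """%GRR against total study variation, from estimated variance components."""
    var_grr = var_repeatability + var_reproducibility
    var_total = var_grr + var_part
    if var_total <= 0:
        raise ValueError("total variance must be positive")
    # Ratio of standard deviations, not of variances.
    return 100.0 * math.sqrt(var_grr) / math.sqrt(var_total)


def classify(pct_grr: float) -> str:
    """Commonly cited AIAG bands; 'marginal' may be acceptable depending on the application."""
    if pct_grr < 10.0:
        return "acceptable"
    if pct_grr <= 30.0:
        return "marginal"
    return "unacceptable"


# Gage variance 1.0 against part variance 15.0: sqrt(1) / sqrt(16) = 25%.
pct = percent_grr(var_repeatability=0.75, var_reproducibility=0.25, var_part=15.0)
print(pct)            # 25.0
print(classify(pct))  # marginal
```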

Q6: What is the baseline performance, what are the specifications, and is the process capable?

Surfaces capability against customer requirements. Tools: capability analysis, Cpk, Ppk, first pass yield.

Q7: What is the Cost of Poor Quality?

Surfaces the financial weight of the current state. Tools: COPQ analysis, quality cost categorization.
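Quality cost categorization is often done with the prevention-appraisal-failure (PAF) model. This sketch assumes line items are already tagged with a category, and the figures in it are hypothetical. Conventions differ on whether appraisal costs belong in COPQ, so the sketch leaves that choice as a flag rather than asserting one.

```python
from __future__ import annotations

from collections import defaultdict

# The four categories of the prevention-appraisal-failure (PAF) quality cost model.
CATEGORIES = ("prevention", "appraisal", "internal_failure", "external_failure")


def categorize(line_items: list[tuple[str, str, float]]) -> dict[str, float]:
    """Roll (description, category, cost) line items up into category totals."""
    totals: dict[str, float] = defaultdict(float)
    for description, category, cost in line_items:
        if category not in CATEGORIES:
            raise ValueError(f"{description!r}: unknown category {category!r}")
        totals[category] += cost
    return dict(totals)


def copq(totals: dict[str, float], include_appraisal: bool = False) -> float:
    """Failure costs, plus appraisal costs if the program's convention counts them."""
    keys = ["internal_failure", "external_failure"]
    if include_appraisal:
        keys.append("appraisal")
    return sum(totals.get(key, 0.0) for key in keys)


# Hypothetical line items for illustration only.
items = [
    ("scrap", "internal_failure", 1000.0),
    ("rework labor", "internal_failure", 500.0),
    ("warranty claims", "external_failure", 2000.0),
    ("final inspection", "appraisal", 300.0),
    ("operator training", "prevention", 400.0),
]
totals = categorize(items)
print(copq(totals))                          # 3500.0
print(copq(totals, include_appraisal=True))  # 3800.0
```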

ANALYZE Phase (Q8–Q10)

Q8: What waste types are present, and are the primary metrics off-target or showing high variation?

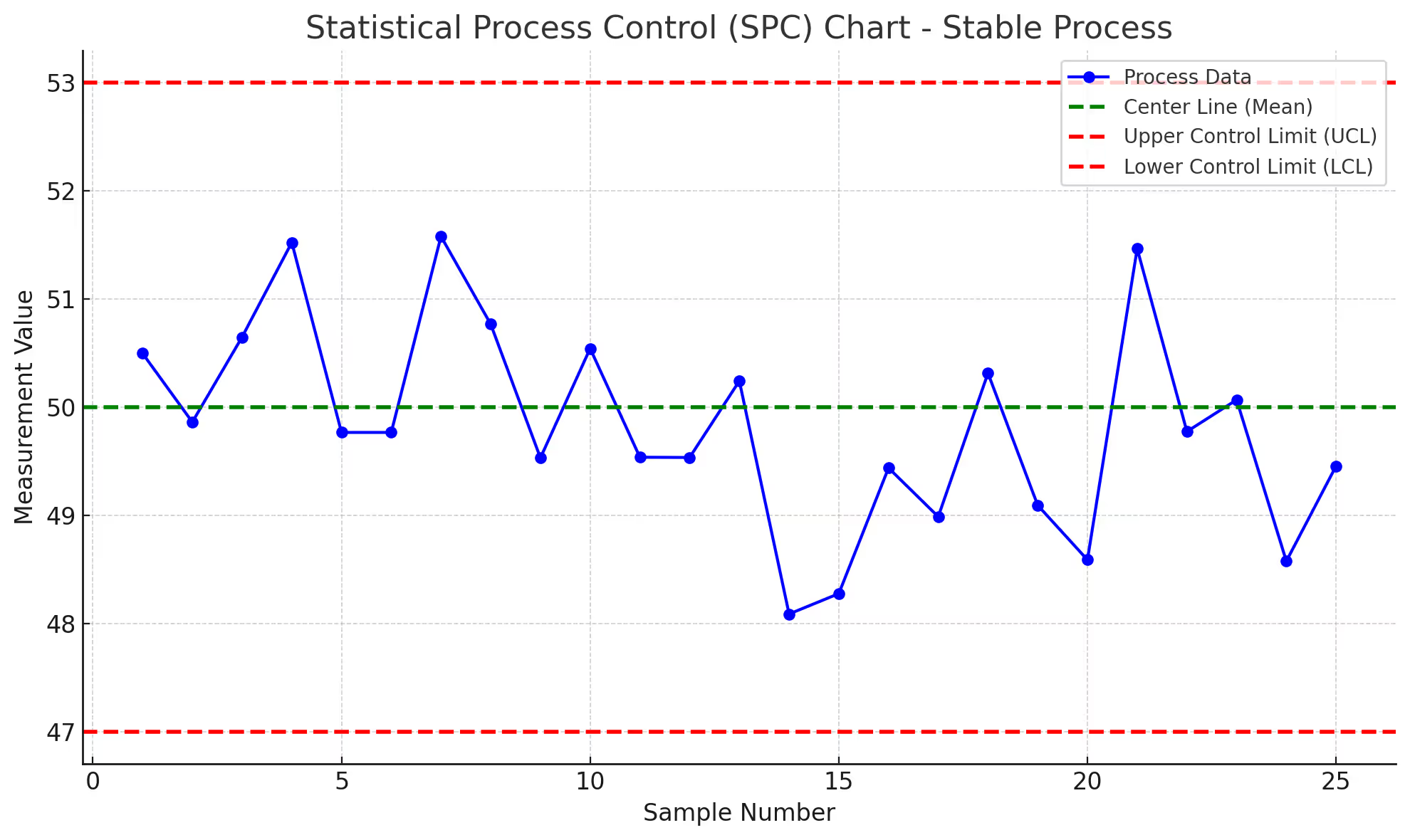

Surfaces waste inventory and variation patterns. Tools: waste walk, Pareto analysis, run charts, control charts.

Q9: What are the sources of the problem and the root causes?

Surfaces validated root causes, not just suspected ones. Tools: 5 Whys, fishbone diagram, FMEA, hypothesis testing, regression analysis.
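To validate a suspected root cause, a team usually tests whether the output actually differs when the cause is present. This sketch uses a permutation test on the difference in means, a dependency-free stand-in for a t-test. The data, the seed, and the 10,000-permutation default are assumptions for illustration.

```python
from __future__ import annotations

import random
import statistics


def permutation_test(group_a: list[float], group_b: list[float],
                     n_permutations: int = 10_000, seed: int = 0) -> float:
    """Two-sided permutation p-value for a difference in group means."""
    rng = random.Random(seed)
    observed = abs(statistics.mean(group_a) - statistics.mean(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)  # reassign group labels at random
        diff = abs(statistics.mean(pooled[:n_a]) - statistics.mean(pooled[n_a:]))
        if diff >= observed:
            extreme += 1
    # The +1 terms keep the estimate from ever being exactly zero.
    return (extreme + 1) / (n_permutations + 1)


# Hypothetical cycle times (minutes) with and without the suspected cause present.
with_cause = [14.2, 15.1, 13.8, 16.0, 15.4]
without_cause = [11.9, 12.4, 12.1, 11.5, 12.8]
print(f"p = {permutation_test(with_cause, without_cause):.4f}")
```

A small p-value supports the root cause hypothesis. A large one means the data do not distinguish the groups, so the cause remains suspected rather than validated.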

Q10: What would a benchmark or world-class process look like?

Surfaces the gap between current and best-in-class performance to set the improvement aspiration. Tools: benchmark study, best-practice review, gap analysis.

IMPROVE Phase (Q11–Q13)

Q11: How can the cause of the waste be removed, and how do inputs affect the process and interact?

Surfaces solution design and input-output relationships. Tools: Design of Experiments, brainstorming, solution selection matrix.
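A two-factor, two-level full factorial design shows interaction most clearly. This minimal sketch assumes factor levels coded -1 and +1. Replicated runs also work, because effects are averages. A nonzero AB effect means the effect of A depends on the level of B. The response values are hypothetical.

```python
from __future__ import annotations

import statistics
from typing import Callable


def factorial_effects(runs: list[tuple[int, int, float]]) -> dict[str, float]:
    """Main and interaction effects for a 2x2 design of (a, b, y) runs.

    Levels are coded -1 (low) and +1 (high); runs coded 0 are ignored.
    """
    def effect(sign: Callable[[int, int], int]) -> float:
        high = [y for a, b, y in runs if sign(a, b) > 0]
        low = [y for a, b, y in runs if sign(a, b) < 0]
        return statistics.mean(high) - statistics.mean(low)

    return {
        "A": effect(lambda a, b: a),
        "B": effect(lambda a, b: b),
        "AB": effect(lambda a, b: a * b),  # interaction column is the product of codes
    }


runs = [(-1, -1, 10.0), (1, -1, 20.0), (-1, 1, 12.0), (1, 1, 30.0)]
print(factorial_effects(runs))  # {'A': 14.0, 'B': 6.0, 'AB': 4.0}
```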

Q12: What is the list of improvements, are tests necessary, and what is the implementation risk?

Surfaces the improvement portfolio and risk assessment. Tools: pilot test plan, risk assessment, implementation plan.

Q13: What is the performance of the improved process, and did the team meet the goal?

Surfaces post-improvement results against the project charter. Tools: post-improvement capability analysis, hypothesis testing, before-and-after comparison.
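Once the charter states the goal numerically, "did the team meet the goal?" reduces to arithmetic. This minimal sketch assumes a lower-is-better metric such as a wait time and a goal expressed as a percent reduction in the median. The function and field names are hypothetical. Whether the shift is statistically real is a separate check, handled with hypothesis testing.

```python
from __future__ import annotations

import statistics


def goal_check(baseline: list[float], improved: list[float],
               target_reduction_pct: float) -> dict[str, float | bool]:
    """Compare before/after medians against a charter goal stated as % reduction."""
    before = statistics.median(baseline)
    after = statistics.median(improved)
    if before == 0:
        raise ValueError("baseline median must be nonzero")
    reduction_pct = 100.0 * (before - after) / before
    return {
        "baseline_median": before,
        "improved_median": after,
        "reduction_pct": reduction_pct,
        "goal_met": reduction_pct >= target_reduction_pct,
    }


# Hypothetical wait times in minutes; charter goal is a 25% median reduction.
print(goal_check([40, 50, 60], [30, 35, 40], target_reduction_pct=25.0))
```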

CONTROL Phase (Q14–Q16)

Q14: How will the improvement be sustained?

Surfaces the control plan and ownership structure. Tools: control plan, standard work, visual management, training plan.

Q15: What is the documented benefit from the improved process?

Surfaces hard-dollar and soft-dollar savings with finance validation. Tools: benefits realization, A3 final report, financial validation.

Q16: Has the team been recognized?

Surfaces team recognition as part of project closure, not as an afterthought. Tools: project celebration, formal recognition, lessons learned capture.

Sixteen questions. Five phases. Every project the same skeleton, regardless of the industry or the variation problem on the table.
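Because every project shares this skeleton, the phase-by-phase list can be captured as a lookup keyed by question rather than by tool. This is a minimal sketch with hypothetical structure and function names. The tool lists are copied from the entries above. The reverse lookup shows one tool serving more than one question.

```python
from __future__ import annotations

PHASES = {
    "Define": range(1, 4),     # Q1-Q3
    "Measure": range(4, 8),    # Q4-Q7
    "Analyze": range(8, 11),   # Q8-Q10
    "Improve": range(11, 14),  # Q11-Q13
    "Control": range(14, 17),  # Q14-Q16
}

TOOLS = {
    1: ["Voice of the Customer", "project charter", "critical-to-quality tree"],
    2: ["SIPOC", "process boundary diagram", "project scope statement"],
    3: ["prioritization matrix", "stakeholder analysis", "RACI chart", "project timeline"],
    4: ["detailed process map", "value stream map", "theory of constraints analysis"],
    5: ["Measurement System Analysis", "Gage R&R", "Attribute Agreement Analysis"],
    6: ["capability analysis", "Cpk", "Ppk", "first pass yield"],
    7: ["COPQ analysis", "quality cost categorization"],
    8: ["waste walk", "Pareto analysis", "run charts", "control charts"],
    9: ["5 Whys", "fishbone diagram", "FMEA", "hypothesis testing", "regression analysis"],
    10: ["benchmark study", "best-practice review", "gap analysis"],
    11: ["Design of Experiments", "brainstorming", "solution selection matrix"],
    12: ["pilot test plan", "risk assessment", "implementation plan"],
    13: ["post-improvement capability analysis", "hypothesis testing", "before-and-after comparison"],
    14: ["control plan", "standard work", "visual management", "training plan"],
    15: ["benefits realization", "A3 final report", "financial validation"],
    16: ["project celebration", "formal recognition", "lessons learned capture"],
}


def phase_of(question: int) -> str:
    """DMAIC phase that a question number belongs to."""
    for phase, questions in PHASES.items():
        if question in questions:
            return phase
    raise ValueError(f"no such question: {question}")


def questions_using(tool: str) -> list[int]:
    """Reverse lookup: which questions list a given tool."""
    return [q for q, tools in TOOLS.items() if tool in tools]


print(phase_of(9))                            # Analyze
print(questions_using("hypothesis testing"))  # [9, 13]
```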

How the 16 Questions DMAIC Framework Holds Up Across Industries

The same 16 questions apply to onshore drilling operations, surgical units, and semiconductor fabrication lines. The deliverables and the tools change. The inquiries do not.

Question 4, current state, looks different in each industry. In an onshore upstream operation, a current-state map traces the well completion sequence from rig-up through first oil. In a regional medical center, the same question maps emergency department patient flow from arrival through disposition. In a semiconductor fab, current-state work maps the wafer process from incoming material through final test.

Same question. Three different visualizations. Three different teams arriving at the same kind of clarity about how their process actually behaves today.

Question 9, root causes, follows the same pattern. In onshore drilling, root cause work on unplanned non-productive time often pulls in fishbone diagrams and equipment reliability data. In healthcare, FMEA work on medication administration errors surfaces system-level failure modes that no single individual could be blamed for.

In semiconductor manufacturing, Design of Experiments work on yield variance isolates which process inputs actually drive the output. Different tools, identical inquiry: what is causing the gap between current and target?

Healthcare-specific note: in care settings, the 16 questions are best framed in clinical quality terms. Patient Safety Indicators, Vizient quartile rankings, Leapfrog Hospital Safety Grades, CMS Star Ratings, Hospital Value-Based Purchasing scores, HCAHPS results, and Hospital-Acquired Infection rates are the metrics that anchor Question 1 and Question 6 in clinical environments. The framework adapts cleanly because the inquiry holds even when the metric vocabulary shifts.

📊 Cross-Industry Pattern: Practitioners who learn the question-first framework move between industries faster than tool-first practitioners. The questions transfer. The tools do not need to.

How to Start Applying the 16 Questions Framework

For belt-trained practitioners, the entry point is the next project. Before opening any tool, the team writes down which of the 16 questions is currently on the table. Tool selection follows. The discipline reorders the work without requiring any change to the existing toolkit.

For program leaders, the entry point is portfolio review. Pull last year's projects. For each one, ask which question it answered. Projects that cannot map cleanly to a question are typically the projects that did not sustain. The audit surfaces structural gaps in how the program selects and structures work, not just gaps in any single project.

For belt instructors, the entry point is module redesign. Open each module with the question rather than the tool. Module 8 becomes "Question 5: how is the process measured, and is the measurement reliable? Tools to answer this question include MSA, Gage R&R, and Attribute Agreement Analysis." The tool list narrows organically because tools that do not answer the question stop earning a slot in the module.

For practitioners at every level, the entry point is the project log. Organize the log by question rather than by deliverable. Sixteen entries become both audit trail and learning record. New practitioners reading the log learn what each tool answered, not just that the tool was used.

The framework is not a replacement for tools. It is the ordering principle that makes tools earn their place in a project. A control chart with no associated question is decoration. A control chart answering Question 8, applied to a process baselined under Question 6, with capability tested under Question 13, is doing work.

Frequently Asked Questions

What is the 16 Questions DMAIC framework?

The 16 Questions DMAIC framework is a federally registered Lean Six Sigma methodology that reorganizes the Define, Measure, Analyze, Improve, and Control phases around 16 strategic questions rather than around tool deliverables. Owned by Variance Reduction International and registered with the U.S. Copyright Office under TXu 1-802-923 (1999/2010) and TXu 1-910-433 (2014), the framework has structured every project under VRI's methodology since 1999.

How is question-first DMAIC different from traditional DMAIC?

Traditional DMAIC is typically taught and executed tool-by-tool. Modules cover specific tools. Project storyboards are organized as tool deliverables. Practitioners learn what tools to deploy in each phase but often do not learn what each tool is meant to surface.

Question-first DMAIC inverts the order. The phase work begins with the question. Tool selection follows. Phase exits require documented evidence that the question was answered, not just that the tool was completed. Practitioners trained question-first transfer between industries more easily because the questions are universal even when the tools shift.

Can the 16 Questions framework apply outside Lean Six Sigma?

Yes. The question structure is methodology-agnostic. Teams using PDCA, A3 problem solving, or hybrid improvement approaches can apply the question discipline without abandoning their preferred framework. The 16 questions describe what improvement work needs to surface. The methodology choice describes how the surfacing happens.

Where can practitioners learn more about the framework?

The framework is documented in VRI's productized toolkit at toolkit.variancereduction.com and embedded in PrysmCore Mentor, an AI guidance system that uses the 16 questions as its mentoring backbone for active improvement projects. Both make the question-first discipline accessible to practitioners working independently between formal training events.

Is the 16 Questions framework copyrighted?

Yes. The framework is federally registered under U.S. Copyright Office registrations TXu 1-802-923 (1999/2010) and TXu 1-910-433 (2014). Variance Reduction International holds the registrations. Verification is publicly searchable through the U.S. Copyright Office records database at publicrecords.copyright.gov.

Tools-first teaches compliance. Questions-first teaches thinking.

Excellence follows the discipline of asking better questions before reaching for better tools.